Change Management Metrics: Measuring Impact, Not Activity

The change management dashboard showed green across every metric. Training completion: 94%. Communication reach: 100%. Stakeholder engagement score: 4.2 out of 5.

Six months later, an internal audit found that 40% of employees had quietly reverted to the old process.

The dashboard was measuring activity. The organization needed to measure change.

This gap between change management metrics and actual organizational change is where most initiatives declare false victory. The numbers satisfied the executive sponsor. The key performance indicators (KPIs) hit their targets. And the people who were supposed to change went back to doing what they had always done.

The problem is not that organizations fail to measure. The problem is what they choose to track. Most change management measurement systems are built around data that is easy to collect rather than data that predicts whether change will sustain. What follows is a framework for distinguishing the metrics that matter from the ones that simply look good on a slide.

Key Takeaways

- Most change dashboards track activity—training completion, communication reach, survey scores—none of which predict whether behavior actually changed or will sustain.

- Leading indicators like sponsor behavior consistency, resistance quality, and voluntary adoption predict sustainability far more reliably than any lagging metric on a dashboard.

- Capability metrics—whether people can perform independently, coach each other, and solve new problems using the new approach—distinguish organizations that sustain change from those that revert.

- The real ROI of change management is not what you gain but what you avoid: the compounding cost of repeated failed attempts that erode trust and make every next initiative harder to launch.

The Measurement Problem: Activity vs. Impact

Most change management measurement systems track activity rather than impact. Training completion, communication reach, and employee survey scores confirm that work happened and people participated. They do not predict whether behavior actually changed or whether the change will sustain beyond the project timeline.

Organizations that report the highest change management scores often show the widest gap between dashboard results and behavioral reality. The metrics were designed to show progress, so they show progress, regardless of what is actually happening.

Three measurement traps explain why so many change initiatives report success while delivering mediocrity.

Vanity metrics produce numbers that satisfy sponsors without predicting outcomes. Training completion rates are the most common offender. A 95% completion rate with 30% behavior change means that 65 percentage points of the workforce, about 68% of the people trained, completed training that produced no measurable outcome. The metric tracked attendance. It said nothing about whether people learned anything, changed how they work, or can perform independently without the training scaffolding.
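To make the arithmetic behind that gap explicit, here is a minimal sketch. The function name `untranslated_training_share` is hypothetical, and the calculation assumes that everyone who changed behavior also completed the training. It expresses the gap as a share of the people actually trained, which is why the result (about 68%) is slightly higher than the 65-point gap measured against the whole workforce.

```python
def untranslated_training_share(completion_rate: float,
                                behavior_change_rate: float) -> float:
    """Share of trained employees who show no measurable behavior change.

    Both rates are fractions of the total workforce (0.0 to 1.0).
    Assumption: everyone who changed behavior also completed the training.
    """
    if not 0 < completion_rate <= 1:
        raise ValueError("completion_rate must be in (0, 1]")
    if not 0 <= behavior_change_rate <= completion_rate:
        raise ValueError("behavior_change_rate must be between 0 and completion_rate")
    return (completion_rate - behavior_change_rate) / completion_rate


# Figures from the example in the text: 95% completion, 30% behavior change.
share = untranslated_training_share(0.95, 0.30)
print(f"{share:.0%} of trained employees show no measurable behavior change")
```

Reporting this share next to the completion rate keeps the attendance number from standing in for impact.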

Activity metrics count effort instead of impact. Communication touchpoints measure how many times people heard the message. They do not measure whether anyone understood what the change means for their daily work or whether comprehension led to action. Pulse surveys sent, town halls held, emails delivered: all activity. None of it tells you whether the change project is producing results.

Sentiment metrics capture feelings without connecting them to behavior. Employee engagement surveys produce a number. That number reflects how people felt on a particular day. It does not capture whether positive sentiment translates to new ways of working. People can feel good about a change initiative and still not adopt it. Sentiment and behavior are correlated, but the correlation is weaker than most dashboards assume.

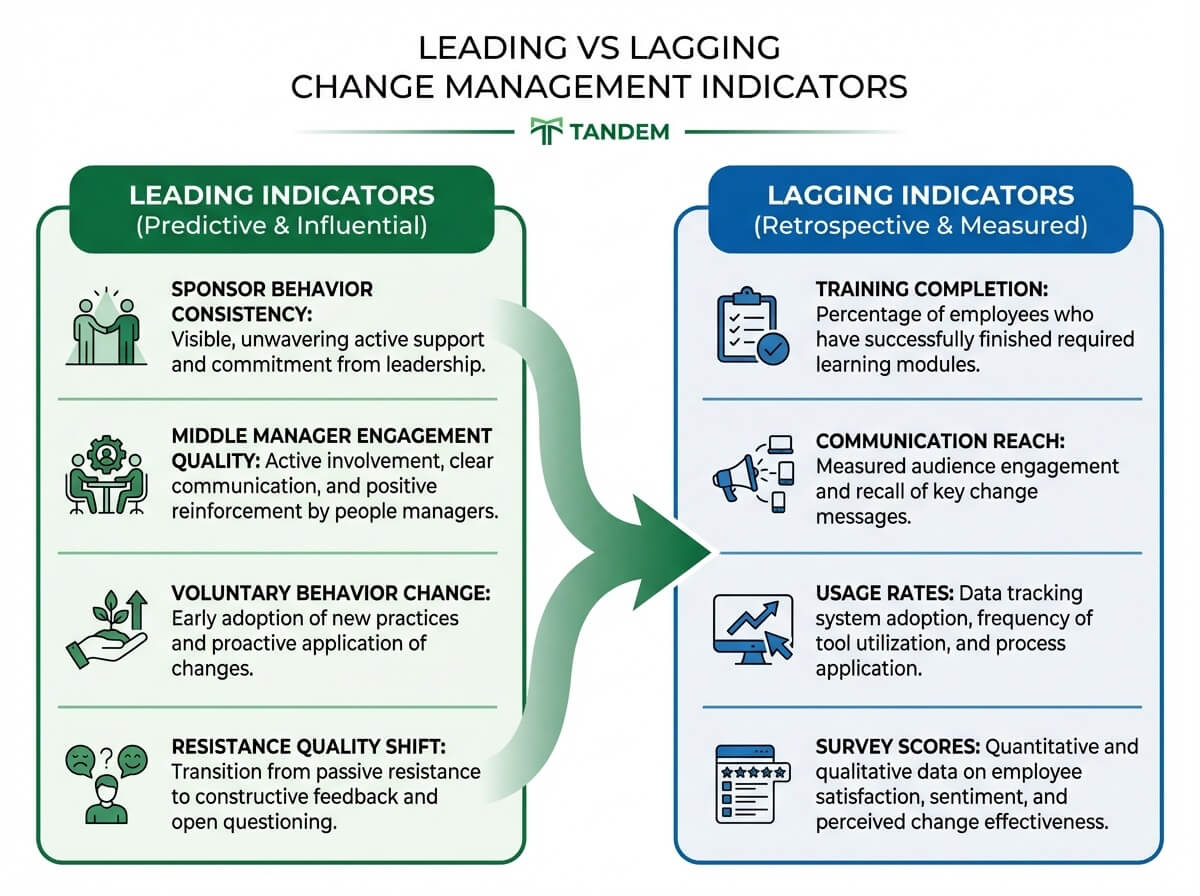

The alternative is building a measurement system that distinguishes leading from lagging indicators and tracks capability development alongside adoption.

Leading vs. Lagging Indicators for Change

Most organizations track lagging indicators exclusively. These confirm what already happened: training completion rates, communication reach statistics, system usage rates, survey satisfaction scores, project milestone completion. Lagging indicators are useful for reporting. They tell you almost nothing about what will happen next.

A change management strategy built around leading indicators shifts the measurement focus from confirmation to prediction. Leading indicators surface the early signals that determine whether change will sustain or revert once the project team moves on.

Four leading indicators have consistently predicted sustainability across change initiatives:

Sponsor behavior consistency. Whether the sponsor’s actions match the sponsor’s communications. The strongest leading indicator we have observed in practice: whether the executive sponsor can articulate the change rationale without slides. If the sponsor needs the deck, the sponsor has not internalized the why. When that happens, every message the sponsor delivers rings hollow to the people being asked to change.

If the sponsor needs the slide deck to explain the change, the sponsor has not internalized it. And neither will anyone else.

Middle manager engagement quality. Not attendance at change briefings, but the quality of questions managers ask afterward. When middle managers ask “how” questions, adoption is likely. When they ask “whether” questions, resistance is active. The type of question predicts the trajectory more reliably than any survey score.

Voluntary behavior change. People doing it when no one is watching. Compliance is observable. Adoption is what happens when the project team is not in the room. This distinction matters because process implementation can force compliance through controls and oversight. Adoption requires something controls cannot produce: genuine understanding of why the new approach is better.

Resistance quality. Not resistance quantity. Early resistance sounds like “why are we doing this?” Productive resistance sounds like “how do we handle the exception cases?” The shift from why to how signals genuine engagement with the change. Organizations that track resistance as a binary (present or absent) miss the most informative data point: whether the nature of the pushback is shifting from emotional to practical.

Leading indicators require more effort to collect than lagging ones. They cannot be automated into a dashboard without losing the signal. But they predict what lagging indicators can only confirm after the fact.

Capability Metrics That Predict Sustainability

After years of supporting organizations through change, we see a consistent pattern: the organizations that sustain change are not measuring different things. They are measuring different dimensions. Adoption is one dimension. Capability is another. Most change dashboards track only the first.

Capability metrics measure whether people can perform independently, not just whether they started using the new system. Three categories of capability indicators distinguish organizations that sustain change from those that revert.

Leader readiness indicators. Can sponsors hold the difficult conversations that change demands? Not “have they been trained on messaging” but “can they respond when someone pushes back in a meeting?” Are middle managers translating the corporate narrative into team-relevant meaning, or are they forwarding slide decks? Is leadership behavior consistent with stated change priorities, or do people see one message and experience another? These indicators overlap with measuring leadership development because change leadership is a development outcome, not a communication task.

Team adaptation indicators. Are teams solving new problems using the new approach, or just following new procedures when the old problems recur? Is knowledge transfer happening peer-to-peer? When people start coaching each other through the change without being asked, capability has transferred. The peer-teaching shift is one of the most reliable signals. Workarounds decreasing over time is the real adoption signal. When employees find creative shortcuts around the new process, adoption has stalled regardless of what the usage dashboard shows.

Organizational resilience indicators. How quickly does the organization recover from implementation setbacks? Is change fatigue increasing or stabilizing? Are people raising issues proactively? That last indicator is a psychological safety signal. When people stop reporting problems, it does not mean the problems disappeared. It means people stopped believing that reporting would lead to action.

Capability metrics require observation, not just surveys. You cannot measure whether a sponsor can hold a difficult conversation through a questionnaire. That is where coaching interventions become a data collection channel, not just an intervention. Coaching conversations surface the resistance patterns, readiness signals, and capability gaps that no dashboard captures.

The ROI Question: Honest Measurement

Most change management ROI calculations weigh the cost of the initiative against an estimated cost of not changing. The numbers are invented. The assumptions are heroic. Everyone in the room knows the spreadsheet is theater. Prosci research on change management measurement provides benchmarks, but even the best frameworks struggle when organizations plug in imaginary savings estimates and call them projections.

A more honest approach measures what you avoid, not what you gain.

The actual cost of failed change is not the initiative. It is the organizational scar tissue that makes the next attempt harder before it even starts.

Cost of reversion. The actual cost of failed change is not the initiative itself. It is the second attempt. And the third. Each failed change erodes organizational trust, increases resistance to the next change, and makes every subsequent initiative harder to launch. The ROI of getting change right the first time includes avoiding the compounding cost of getting it wrong repeatedly. Organizations that track reversion rates often find that the business benefits of sustained change are far larger than the initial business case suggested.

Time to productivity. How quickly do people reach competence in the new way of working? Not “when did they log in to the new system” but “when did their performance in the new process match their performance in the old one?” Coached change leaders typically see faster time to productivity because barriers are identified and addressed before they calcify into workarounds.
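One way to operationalize that definition is sketched below. Everything in it is an illustrative assumption: the function name `weeks_to_productivity`, the weekly output figures, and the rule that performance must hold at the old-process baseline for two consecutive weeks before it counts.

```python
from typing import Optional, Sequence


def weeks_to_productivity(weekly_output: Sequence[float],
                          baseline: float,
                          sustain_weeks: int = 2) -> Optional[int]:
    """Return the 1-based week in which output first reaches the old-process
    baseline and then stays at or above it for `sustain_weeks` weeks in a row.

    Returns None if the baseline is never sustained in the data provided.
    """
    if sustain_weeks < 1:
        raise ValueError("sustain_weeks must be at least 1")
    run = 0  # consecutive weeks at or above baseline so far
    for week, output in enumerate(weekly_output, start=1):
        run = run + 1 if output >= baseline else 0
        if run == sustain_weeks:
            return week - sustain_weeks + 1  # first week of the sustained run
    return None


# Hypothetical weekly output after go-live; 100 is the old-process average.
weekly = [62, 70, 81, 100, 92, 101, 104, 107]
print(weeks_to_productivity(weekly, baseline=100))  # prints 6
```

The sustain rule is a design choice: in the sample data, week 4 touches the baseline once and then dips, so counting it would overstate how quickly people reached competence.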

Talent retention. What rarely appears on ROI spreadsheets: the people who leave because they were asked to change without being supported through it. Replacement costs for experienced employees run 50-200% of annual salary. Customer satisfaction drops when experienced people leave and new hires learn on the job. These costs are real, measurable, and almost never attributed to poorly managed change. For organizations that invest in executive coaching during transitions, retention data often provides the clearest ROI signal.

None of these metrics require invented numbers. They require tracking what actually happened to people and performance over time.

Building a Measurement System

Standard change management frameworks solve the lagging indicator problem well. ADKAR offers individual-level progress tracking across awareness, desire, knowledge, ability, and reinforcement. Prosci benchmarking provides industry comparison data. Survey instruments capture sentiment snapshots. Where every standard framework falls short: tracking whether leaders are developing the capability to lead future changes, not just this one.

That is the gap between a change measurement system and a change capability measurement system. Practical guidance for building a system that measures both:

- Start with three leading indicators, not ten lagging ones. Pick three from the leading and capability indicators above that match your change type. Technology changes prioritize voluntary usage and workaround reduction. Cultural changes prioritize sponsor behavior consistency and resistance quality. Strategic alignment changes prioritize middle manager translation quality.

- Measure behavior in context, not in training environments. People perform differently when observed during training than when working under real conditions. The measurement that matters happens at the desk, in the meeting, during the customer call.

- Track resistance quality over time. Set up a simple tracking mechanism for the nature of questions and objections surfacing in team meetings. The shift from “why” to “how” is the most reliable early signal that adoption will follow.

- Build qualitative assessment alongside quantitative dashboards. Coaching conversations, skip-level interviews, and structured observation provide the signal that surveys miss. The goal is not to replace data with anecdote but to ensure the data you collect includes the human dimension that predicts sustainability.
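The resistance-quality tracking described above can be as simple as a tagged log. The sketch below is one possible shape, not a prescribed tool: the category labels, the function name `how_share_by_period`, and the sample entries are all hypothetical, and the tagging itself is assumed to be done by a person who heard the question in context.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

# Hypothetical tags: "why" and "whether" questions challenge the change,
# "how" questions engage with making it work.
CATEGORIES = ("why", "whether", "how")


def how_share_by_period(log: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Given (period, category) pairs, return the share of "how" questions
    in each period, ordered by period label."""
    counts: Dict[str, Counter] = {}
    for period, category in log:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category!r}")
        counts.setdefault(period, Counter())[category] += 1
    return {p: c["how"] / sum(c.values()) for p, c in sorted(counts.items())}


# Hypothetical entries tagged by a facilitator after team meetings.
log = [
    ("2025-01", "why"), ("2025-01", "why"), ("2025-01", "whether"), ("2025-01", "how"),
    ("2025-02", "why"), ("2025-02", "how"), ("2025-02", "how"), ("2025-02", "whether"),
    ("2025-03", "how"), ("2025-03", "how"), ("2025-03", "how"), ("2025-03", "why"),
]
shares = how_share_by_period(log)
for period, share in shares.items():
    print(f"{period}: {share:.0%} of questions were 'how' questions")
```

A rising share of "how" questions is the shift the text describes. The code only does the counting; the judgment about which category a question belongs to stays with the observer, which is where the signal comes from.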

A measurement system that tracks capability development alongside adoption tells you not just whether this change is working, but whether your organization is becoming better at change itself.

The Real Test

The test of a change management measurement system is not whether the dashboard is green. It is whether the dashboard would predict what an internal audit finds six months later. If the dashboard says the change succeeded and the audit reveals reversion, the measurement system failed before the change did.

Before building your next measurement framework, ask one question: are you tracking what happened, or are you tracking whether it will sustain?

The answer reveals whether you are measuring change management activity or change management impact. The organizations that sustain change have learned the difference. Their dashboards may look less impressive on any given day, but their results hold up to scrutiny over time.
