AI in Your Coaching Practice: A Guide for Coaches in Training

In every cohort I work with right now, AI is the topic that nobody scheduled and everybody brings. A new coach raises a hand on day three of training and asks, “Should I be using AI in my practice?” The room goes still. They want a single answer. There isn’t one.

The question they are asking is the wrong one. The right one is harder, and it sits underneath: what kind of coach do you want to become, and which uses of AI move you toward that — or away from it?

That harder question is the one I want to walk through with you here. Not because I have a tidy framework that will solve your dilemmas, but because the field itself doesn’t have one yet. Look at what landed in coaching publications in the past two weeks. ICF Global asked whether AI can help in human-centered work. Chief Learning Officer Magazine argued that AI is only as good as the standard you set. Coaching at Work published two pieces in the same week: one skeptical about whom the tools serve, one warning about the cost of automating intimacy. Four pieces, four quite different positions, no consensus.

If you are in training right now, you do not get to wait for the field to settle this. You are about to step into client work, and your first hundred client hours will be shaped by what you decide AI is for — and what it is not for.

Key Takeaways

- The field is divided on AI in coaching. New coaches cannot borrow a position; they have to reason their own way to one.

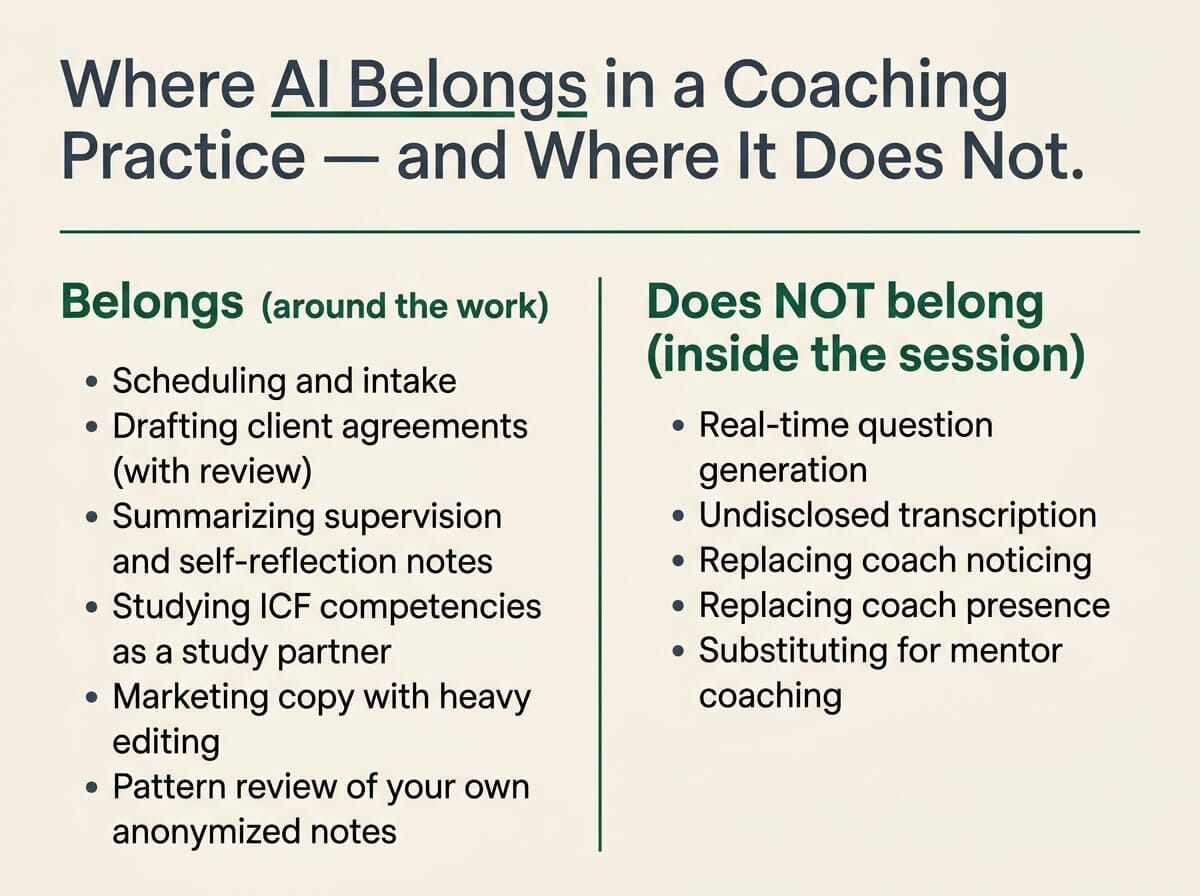

- Inside the contracted coaching hour, AI does not belong as a participant. Presence is the work the credential exists to certify.

- AI legitimately accelerates the practice around the practice: scheduling, drafting, study, marketing — never the live session itself.

- A written, five-question personal AI policy clarifies the lines you will not cross before a client ever asks you to cross them.

What the Field Is Saying — and Why It Is Confusing

Read the four pieces side by side and you can hear four different postures inside our profession.

ICF Global takes the institutional voice. The headline asks the question rather than answering it: coaching is human-centered, so can AI help? You can hear the duty of care. An organization that credentials more than fifty thousand coaches cannot afford to be careless on this. They are leaning forward, but they are also leaning carefully.

Chief Learning Officer Magazine takes the buyer’s voice: AI is only as good as the standard you set. That is a learning-and-development executive talking to other learning-and-development executives. The message is about discipline. If you bring careless inputs and accept careless outputs, you get careless results. If you bring high standards, you get tools that earn their keep.

Then comes Coaching at Work, twice in the same week. One piece, titled “SAGE Against the Machine,” carries the practitioner-skeptical voice, the one that watches a tool and asks whom it serves. A second, titled “Automating Intimacy,” carries the boundary-watcher’s voice, the one that notices when something private is being industrialized and refuses to let it pass without comment.

Notice what is happening. The institutional voice is curious. The buyer’s voice is pragmatic. The practitioner’s voice is skeptical. The boundary voice is alarmed. These are four serious people in our field looking at the same technology and arriving in four different places. That is the part you have to take in. If thoughtful coaches and educators disagree, you cannot just adopt one of their positions and call it your view. You have to do the discernment yourself.

Where AI Does Not Belong: Inside the Coaching Session

I will start with the strongest claim and stand on it. Inside the contracted coaching hour, AI does not belong as a participant.

Three concrete things follow from that.

One: not as an undisclosed transcriber. If something is recording the conversation, the client knows about it, has consented to it in the agreement, and knows where the recording goes. No silent listeners.

Two: not as a real-time question generator. The session is not a place to glance at an AI window between client breaths and copy a question. The pause you take to find the right question is part of the work the client is paying for. It is also part of the work you are training to do.

Three: not as a co-pilot whispering hypotheses into your ear. Your hypotheses about what is happening for the client are formed by your own noticing. If a model is forming them for you, you are not building the muscle the credential exists to certify.

If you outsource your noticing in the moment, you are not learning to coach. You are learning to operate a machine that does something coach-shaped.

The reason this line matters is not technological. It is competency-based. In my experience, coaching presence is the ICF competency that takes longest to develop. It is built through the discomfort of sitting with a client and not rescuing yourself with input from elsewhere. It is built through silence you have to tolerate before the right question shows up. If you fill that silence with a tool, you can get through your training without ever developing presence.

The same goes for active listening. Active listening is not transcription. It is the felt-sense work of catching what the client is not saying, the small shifts in language, the moment a story changes shape. A model can transcribe; it cannot listen. If you let it pretend to do your listening for you, you will not notice that you have stopped listening.

The session is also where informed consent and confidentiality live. Whatever your view on AI tools, the client’s data and words are theirs. Bringing AI into the room without explicit, plain-English consent is a Code of Ethics problem, not a preference problem.

Where AI Does Belong: The Practice Around the Practice

Now let me say what I am not saying. I am not saying AI has no place in your coaching life. I am saying it has no place inside the session. Outside the session is a different conversation.

Almost everything that surrounds the work but is not the work is fair game, with discipline.

- Scheduling and intake logistics. Booking, reminders, calendar conflicts, intake form reviews. The administrative tax of running a practice is real, and you lose no competency development by letting a tool absorb it.

- Drafting client agreements and proposals. Use a model to produce a first draft, then edit hard. Do not send a coaching agreement you have not read line by line and made your own.

- Turning self-reflection notes into supervision themes. After a session, you write your own notes (always). With client details removed, a model can help you turn a stack of post-session notes into patterns you want to bring to your next supervision conversation.

- Studying ICF competencies and markers. A model is a useful study partner for unpacking the competencies, working through scenarios, or testing your understanding of the markers. It is not a substitute for mentor coaching, ever.

- Marketing copy for your practice. First drafts of website copy, newsletter intros, social posts. Edit heavily, in your own voice. If your marketing sounds like everyone else’s marketing, you have a problem you cannot solve with a different prompt.

- Pattern review of your own anonymized notes. With proper de-identification and your own ethics review, a model can help you see patterns across thirty sessions you would not otherwise spot. This is reflection, not the work itself.

The line is the same in every case. The work itself — the contracted session — is yours. The infrastructure around the work is a fair place to use leverage. If a use of AI moves you out of the session and into the support layer, treat it as a candidate. If it moves into the session, the answer is no.

The Standard You Set Becomes the Standard You Get

Take CLO Magazine’s point seriously and translate it for the coach in training. AI output reflects the standards encoded in your prompt and the discernment you bring to the result. The risk for a new coach is not that AI is bad. The risk is that you do not yet have the trained eye to spot when its output is generic, off-competency, or quietly wrong.

Picture this. A new coach asks a model for “ten powerful coaching questions for a client struggling with delegation.” The model produces a list. Several of the questions are leading. A couple are advice dressed up with a question mark. One assumes the coach knows the client’s motivation better than the client does. To a coach mid-PCC, those problems jump off the page. To a coach in week six of ACC training, the list looks fine.

The development order matters. Train your own discernment first. Bring the tool in second. If you cannot critique a coaching question on your own, you cannot critique an AI-generated coaching question. You can only adopt or reject it on instinct, and that is a thin substitute for skill.

This is not me being precious about technology. It is the same principle the ICF Core Competencies have always pointed to. You build coaching mindset and embodied presence first. You build the muscle of asking your own question, being willing to live with silence, sitting in not-knowing. Once those are real, the tool becomes a useful sparring partner. Before they are real, the tool is a crutch that disguises the missing skill.

If you are tempted to argue with this, ask yourself a different question. The coaches you most admire — the ones whose presence you feel even on a Zoom call — how did they get there? Not by outsourcing their noticing. They got there by doing the slow work of learning to be with another person under pressure. The tool will not change that path. It will only change how easy it is to fool yourself about being on it.

The Automating Intimacy Question

Coaching at Work’s phrase is the one I keep coming back to. Automating intimacy. It names something you can feel even before you can argue for it.

Coaching is a relational craft. Something happens between two humans (the steady gaze, the patience, the willingness to sit with a difficult feeling and not fix it) that a conversation with a machine cannot reproduce. A model can produce coherent words at coaching-shaped moments. It cannot produce the experience of being met.

For the new coach, this matters in two directions at once. It matters for your client, obviously: they came to you because they wanted to be heard by another person, not by a system. It also matters for you. Carrying the relational weight of a coaching conversation is uncomfortable in ways that build you. If you let a tool carry that weight, you do not learn to carry it. You graduate the program with a credential and not the capacity it was meant to certify.

Protect the relational core of your practice fiercely. Not out of nostalgia. Out of professional self-respect.

A Practical AI Policy for a Coach in Training

Here is what to do with all of this. Write a personal AI policy. One page. Before your next client conversation, ideally.

It does not need to be elegant. It needs to be yours. The reason to write it down is that the lines you will not cross are easier to hold when you decided them in a quiet moment than when a client casually asks if it’s OK to record the call for transcription. Decisions made in advance are decisions you keep. Decisions made under social pressure are decisions you regret.

Five questions to answer.

Five Questions for Your Personal AI Policy

- What will I never use AI for in my practice? Name the lines explicitly. Mine include the live session, in-session question generation, and any use that would compromise client confidentiality.

- Where will I use AI, and what is my disclosure standard? If you use AI in any client-facing way (intake summaries, follow-up notes, scheduling), what will you tell clients, and where? The coaching agreement is a good place.

- What does informed consent look like when AI touches a client’s data? If a tool processes client words, what consent language do you use? What can the client opt out of? Where does the data go and for how long?

- How will I keep AI out of the moment of contact in a session? Concrete logistics: tabs closed, phone face-down, any AI tools shut down before the session starts.

- How will I revisit this policy as I move from ACC to PCC to MCC? Set a calendar reminder for one year out, and another at every credential transition. The lines that make sense at ACC may shift, in either direction, as your practice matures.

Two notes on how to use this. First, ground the policy in the ICF Code of Ethics and in ICF Competency 1, Demonstrates Ethical Practice. Your policy is not a personal preference document. It is a professional standards document. Second, when you write the “disclosure” section, anchor it in the coaching agreement. The agreement is where confidentiality, scope, and now AI use should live in plain language.

You will revise this policy. That is the point. Writing it now means you have something to revise.

What Your First Hundred Hours Will Be Made Of

Step back from the technology debate for a moment. The coaches I have watched develop into MCCs over the past decade are not the loudest AI adopters or the loudest AI refusers. They are the ones who decided early what their practice stands for and let the tools serve that. Their decisions are quiet. Their boundaries are clear.

Your first hundred client hours are the most formative hours of your career. You will never get them back, and the habits you build in them will shape every hour after. If those hours are made of careful noticing, honest silences, and questions that surprised even you — you will become a coach. If they are made of glancing at a screen for the next prompt, you will become something else.

If you are exploring the ICF certification path, take a quiet hour to sit with what kind of coach you want your future clients to remember. Then write the policy. Then go back to the work.

Frequently Asked Questions

Should I tell my client I use AI?

Yes, in writing, in the coaching agreement. Be specific about what AI touches and what it does not. “I use AI to draft my newsletter and to organize scheduling logistics. I do not use AI inside our sessions, and I do not feed your words or any identifiable session content to an AI tool.” Plain English. No legal hedging.

Is it OK to record sessions for AI to summarize?

Only with explicit, advance, opt-in consent from the client, and only if you have a defensible answer to: where does the recording live, who can access it, how long is it retained, and how does the client revoke consent. If any of those answers is unclear, the answer is no.

Can AI help me prepare for ICF assessments?

As a study partner, yes. As a substitute for mentor coaching, no. The mentor relationship is what builds the embodied competence the assessment is testing for. A model can help you understand the markers; it cannot help you embody them.

Will AI replace coaches?

It will replace coaches who are doing things that did not need a human in the first place. It will not replace coaches who are doing the work coaching is actually for — the relational, presence-based, accountability work that only happens between two people who showed up. The question is not whether AI replaces coaches. It is whether you are doing the work that cannot be replaced.
