The Hundred Dollar Trap: Why the Safe Choice Is the Dangerous One

Would you take $100 right now, or a 10% chance at $1,000?

Same expected value. Most people take the guaranteed money. Kahneman and Tversky spent decades explaining why, and the work earned Kahneman the Nobel Prize in economics in 2002. Humans overweight losses: by their estimate, a loss feels about 2.25 times as bad as an equivalent gain feels good. When you can take the safe option, you take it.

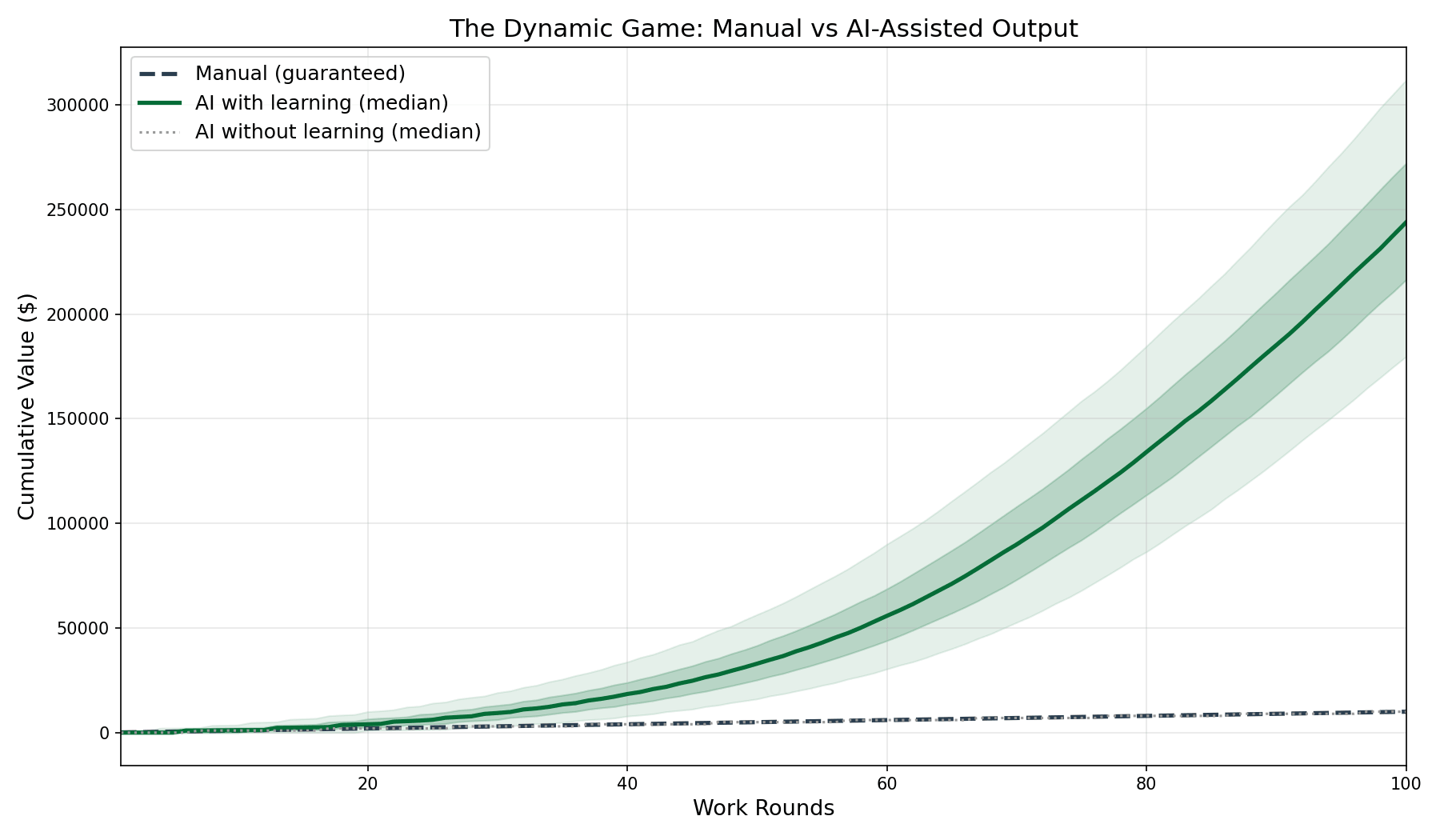

Now change one thing about the game. Make it repeatable. Round after round, the same choice. And with each round you play, the probability ticks upward and the payoff grows. Round one: 10% chance at $1,000. Round twenty: 25% chance at $2,000. Round fifty: 45% chance at $3,500. The guaranteed $100 stays the same.
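The arithmetic behind those three rounds is worth making explicit. The sketch below only multiplies the probabilities and payoffs quoted above; the names `ROUNDS`, `GUARANTEED` and `expected_value` are illustrative, not part of any published model.

```python
from fractions import Fraction

# (round, win probability, payoff in dollars), exactly as quoted in the text
ROUNDS = [
    (1, Fraction(10, 100), 1000),
    (20, Fraction(25, 100), 2000),
    (50, Fraction(45, 100), 3500),
]
GUARANTEED = 100  # the safe option never changes


def expected_value(probability, payoff):
    """Expected dollars from one play of the gamble."""
    return probability * payoff


expected_values = {rnd: expected_value(p, payoff) for rnd, p, payoff in ROUNDS}

for rnd, ev in expected_values.items():
    print(f"round {rnd}: gamble EV ${float(ev):,.0f} vs guaranteed ${GUARANTEED}")
```

The gamble is worth $100 in round one, $500 in round twenty and $1,575 in round fifty. The guaranteed option is a fair trade only on the first play.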

That is the AI adoption decision. And the developer who keeps taking the $100 is walking into a trap that compounds.

The Trap Has Five Walls

Most analysis of AI adoption treats it as one problem. It is not. It is five problems operating simultaneously, each at a different level of the brain and the organization. Address any one of them in isolation and the other four keep the trap closed.

Wall one: the threat response. Before a developer evaluates AI, their brain has often already classified it as a threat. Identity threat ("I am a craftsman, not a prompt engineer"), competence threat ("my 15 years of expertise are devalued"), status threat ("juniors will outperform me"). These threat signals can shut down deliberate evaluation before it starts. The conclusion arrives pre-packaged. The rest is confirmation. This is not weakness. It is the brain's threat detection system working as designed, applied to a situation where it produces the wrong answer.

Wall two: the perception trap. Even developers who get past the threat response and actually try AI face a perceptual distortion. Under conservative modeling assumptions, 67% of individual rounds produce zero visible output. Two thirds of the time, the developer has nothing to show. Kahneman's loss aversion makes each of these zero-output rounds feel 2.25 times as painful as a productive round feels good. The cumulative experience feels overwhelmingly negative. The developer quits. They were on track to produce 7.5 times their manual output by round 100. Their perception said otherwise, and perception won.
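A rough way to see why the experience feels negative: score each productive round as +1 and each zero-output round as minus 2.25, then average. This is a deliberately crude sketch, assuming every productive round feels equally good regardless of its size; the function name and the scoring unit are assumptions for illustration only.

```python
LOSS_AVERSION = 2.25      # loss weight quoted in the text
ZERO_OUTPUT_SHARE = 0.67  # share of rounds with nothing to show, from the text


def felt_score_per_round(zero_share, loss_weight):
    """Average felt experience per round.

    Unit: 'how good one productive round feels' (an assumed scale).
    """
    productive_share = 1.0 - zero_share
    return productive_share * 1.0 - zero_share * loss_weight


felt = felt_score_per_round(ZERO_OUTPUT_SHARE, LOSS_AVERSION)

# Share of empty rounds at which the felt score crosses zero:
# (1 - z) = z * loss_weight  =>  z = 1 / (1 + loss_weight)
break_even_zero_share = 1.0 / (1.0 + LOSS_AVERSION)

print(f"felt score per round: {felt:.4f}")
print(f"break-even share of empty rounds: {break_even_zero_share:.1%}")
```

Under these assumptions the felt score is about -1.18 per round, and the experience turns net negative once more than roughly 31% of rounds come up empty. At 67% the developer is far past that line, whatever the actual output is doing.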

Wall three: the static game fallacy. Developers who push through the perceptual distortion still face a framing problem. They evaluate AI as a single decision: adopt or do not adopt. Calculate expected value, compare to manual, choose. That is static analysis of a dynamic system. The game changes as you play it. Probability climbs with every round because you learn: better specifications, better problem decomposition, better judgment about what to delegate. Payoff grows because you discover applications you would never have attempted. A Monte Carlo simulation across 10,000 trajectories confirms: even under conservative assumptions, the AI developer produces 7.5 times the cumulative value of the manual developer. Adversarial testing across 15 hostile scenarios and a 72-cell parameter grid broke the model exactly once, under four simultaneous extreme conditions. For normal software development, the math holds in every realistic scenario tested.
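To make the dynamic framing concrete, here is a minimal Monte Carlo sketch. It is not the model behind the 7.5x figure, whose parameters are not given here. It assumes the probability and payoff move in straight lines between the three rounds quoted earlier (10% at $1,000, 25% at $2,000, 45% at $3,500), runs for 50 rounds and compares against $100 per round. Every name in it is hypothetical.

```python
import random
from statistics import mean

# Anchor points quoted in the article: (round, win probability, payoff in dollars)
ANCHORS = [(1, 0.10, 1000.0), (20, 0.25, 2000.0), (50, 0.45, 3500.0)]
GUARANTEED = 100.0


def interpolate(round_number):
    """Probability and payoff for a round.

    Assumption: piecewise-linear between the quoted anchors, flat afterwards.
    """
    if round_number <= ANCHORS[0][0]:
        return ANCHORS[0][1], ANCHORS[0][2]
    for (r0, p0, v0), (r1, p1, v1) in zip(ANCHORS, ANCHORS[1:]):
        if r0 <= round_number <= r1:
            t = (round_number - r0) / (r1 - r0)
            return p0 + t * (p1 - p0), v0 + t * (v1 - v0)
    return ANCHORS[-1][1], ANCHORS[-1][2]


def simulate_trajectory(rng, rounds):
    """Total dollars from taking the gamble every round."""
    total = 0.0
    for r in range(1, rounds + 1):
        p, payoff = interpolate(r)
        if rng.random() < p:
            total += payoff
    return total


def run(trajectories=2000, rounds=50, seed=0):
    rng = random.Random(seed)  # seeded so the run is repeatable
    gamble_totals = [simulate_trajectory(rng, rounds) for _ in range(trajectories)]
    safe_total = GUARANTEED * rounds
    return {
        # average gamble total relative to always taking the $100
        "mean_ratio": mean(gamble_totals) / safe_total,
        # fraction of trajectories that still end below the safe total
        "share_behind": sum(t < safe_total for t in gamble_totals) / trajectories,
    }


result = run()
print(result)
```

The point of the sketch is the shape, not the number: a single-round expected value comparison says the two options are equal, while the cumulative comparison over a ramping game does not.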

Wall four: the structural trap. The math only works if the developer can absorb the learning period. A developer with two weeks of managerial slack produces $72,055 of net value. A developer with one week of tolerance produces $2,320. Factor of 31. Same person, same tools, different floor. This mirrors the structure of financial poverty traps: the people who most need the upside are the least able to afford the path to it. The difference is not ability. It is whether the environment provides enough runway for the math to take over. The minimum viable investment is 7 rounds. The cost is not dramatic. The barrier is the two weeks of visible underperformance that most measurement systems punish.
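The effect of the floor can be sketched with a simple quit rule. The model below is an illustration under stated assumptions, not the calculation that produced the dollar figures above: probability and payoff ramp linearly from the round-1 to the round-50 values quoted earlier, and a developer who is behind the $100-per-round baseline when the runway ends reverts to the safe option for good. The names `gamble`, `career_value` and `mean_value`, and the runway lengths of 3 and 10 rounds, are hypothetical.

```python
import random
from statistics import mean

GUARANTEED = 100.0
TOTAL_ROUNDS = 50


def gamble(round_number):
    """Assumed linear ramp: 10% at $1,000 in round 1 to 45% at $3,500 in round 50."""
    t = (round_number - 1) / (TOTAL_ROUNDS - 1)
    return 0.10 + 0.35 * t, 1000.0 + 2500.0 * t


def career_value(rng, runway):
    """Gamble for `runway` rounds, then quit to the safe option if still behind."""
    total = 0.0
    for r in range(1, TOTAL_ROUNDS + 1):
        if r == runway + 1 and total < GUARANTEED * runway:
            # Out of runway and behind the baseline: take $100 for every remaining round.
            return total + GUARANTEED * (TOTAL_ROUNDS - runway)
        p, payoff = gamble(r)
        if rng.random() < p:
            total += payoff
    return total


def mean_value(runway, trajectories=2000, seed=0):
    rng = random.Random(seed)  # seeded so the comparison is repeatable
    return mean(career_value(rng, runway) for _ in range(trajectories))


short_runway = mean_value(runway=3)
long_runway = mean_value(runway=10)
print(f"mean value, 3-round runway:  ${short_runway:,.0f}")
print(f"mean value, 10-round runway: ${long_runway:,.0f}")
```

Same gamble, same player, different tolerance for early empty rounds. With a short runway most trajectories are cut off before the ramp has had time to pay.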

Wall five: the culture tax. Organizations amplify or dampen every wall above through their failure tolerance. A company that says "fail fast" but measures weekly sprint velocity sends a signal that every developer reads correctly: do not experiment. The effective burn budget drops to near zero regardless of the company's actual resources. The result: 3% of available AI value captured instead of 100%. That delta, multiplied across the engineering organization, is the Culture Tax. It compounds quarterly because risk-averse culture produces low AI adoption, which produces competitive gaps, which produce pressure on results, which produces more risk aversion.

The Historical Frame

Every major tool revolution follows the same pattern. The tools advance. The people and processes lag. The lag costs generations of painful adaptation.

James Watt patented his improved steam engine in 1769. Britain's first enforceable child labor law, the Factory Act of 1833, came 64 years later. Scientific management theory came 142 years later. Workplace safety regulations matured over 150 years. The floor that made the transition survivable for workers was built after the fact, slowly, at enormous human cost.

We have something the Industrial Revolution did not. We have behavioral economics that explains loss aversion. Organizational psychology that maps psychological safety. Change management frameworks that structure transitions. Professional coaching methodologies that develop people through change.

The question is not whether the people and processes will catch up to the AI tools. They always do. The question is whether we compress this to five years intentionally or fifty years accidentally.

The Paradigm Problem

There is a subtler trap beneath the five walls. The developer who evaluates AI as a tool for writing code faster has asked the wrong question. That is asking for a faster horse.

AI does not make the old job faster. It makes a different job possible. The payoff growth in the model is not "same feature, fewer hours." It is "categories of work that were previously outside one developer's capability range." The manual developer has a fixed ceiling built from hours in the day and keystrokes per minute. The AI developer has a ceiling that climbs because both probability and payoff shift upward with practice. By the time the gap is visible, it is already large.

This connects to something I wrote about in Reading the Reader: AI did not eliminate software engineering. It x-rayed it. The visible layer was typing. The actual job was interpretation. AI made the implementation layer cheap and exposed the real structure of the work. The developer who uses AI to type faster is optimizing the wrong layer. The developer who uses AI to spend more cognitive cycles on interpretation, architecture and customer understanding is working on the layer that always mattered.

What This Argument Can and Cannot Do

This article series is a rational argument. It is built on mathematical modeling, behavioral economics and organizational analysis. If your resistance to AI adoption is rational, based on evidence you have gathered and evaluated, this argument might change your mind.

If your resistance is pre-rational, if it lives in the threat response before evaluation begins, or in the perception that distorts the experience, or in the structural constraints that make experimentation unaffordable, then this argument does something different. It does not convince. It provides a framework for understanding what is happening and where the leverage points are.

The five walls of the trap are not equally addressable by a blog post. The math can be shown. The perception distortion can be named. The structural and cultural interventions can be specified. But the threat response, the wall that prevents evaluation from starting, requires something a rational argument cannot provide: enough safety to put the threat aside and look at what is actually in front of you. That is a different kind of work. It is not less important than the math. It is the precondition for the math to matter.

The Hundred Dollar Trap

The trap is choosing the guaranteed small outcome because the path to the large outcome requires tolerating a period of visible failure. The period is short. The cost is small. The alternative is falling behind a curve that accelerates every quarter.

The trap has five walls. Each wall operates at a different level: neurological, perceptual, rational, structural, organizational. Each wall has a specific mechanism and a specific intervention. Breaking through any one wall while the others remain intact leaves the trap closed.

We know the mechanism of each wall. We know the cost of the trap. We know the cost of breaking out. We have the tools to address all five walls simultaneously, if we choose to.

The safe choice is the dangerous one.
