The Math Behind AI Adoption and Why It Survives Every Objection

I built a Monte Carlo simulation of AI adoption. 10,000 developers, 100 work rounds each. Conservative assumptions: half the learning rate an optimist would choose, 60% ceiling on AI reliability, API costs deducted every round whether you succeed or not.

Then I tried to break it. Fifteen adversarial scenarios. A 72-cell parameter grid designed to find the conditions where AI adoption is a bad bet.

I found one. It requires four hostile conditions simultaneously. For everyone else, the math is not close.

Yes, AI helped build this analysis. The model, the stress tests, the visualizations. I did it in an afternoon. That is the point.

The Setup

Consider two developers working side by side. Same company, same role, same codebase.

Developer A works manually. Every round produces $100 of value. A round is roughly a week of focused work on one meaningful unit: a feature, a module, a significant bug fix. Guaranteed output. No variance. Flat.

Developer B works with AI. Round one: 10% chance of producing $1,000, 90% chance of producing nothing. Expected value: $100. Identical to Developer A in round one.

The question is what happens over 100 rounds.

If the game were static, the answer would be: same expected value, higher variance, more stress. No rational reason to prefer B.
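The static case can be checked with a few lines of arithmetic. This is a minimal sketch using only the round-one figures stated above; it treats rounds as independent, which is an assumption made for illustration.

```python
# Static game: Developer B never improves, so round one repeats 100 times.
p_success = 0.10      # chance a round pays off (from the article)
payoff = 1_000.0      # value of a successful round, in dollars
manual_value = 100.0  # Developer A's guaranteed value per round
rounds = 100

expected_per_round = p_success * payoff
# Standard deviation of an all-or-nothing payoff: sqrt(p * (1 - p)) * payoff
std_per_round = (p_success * (1 - p_success)) ** 0.5 * payoff
# Independent rounds: variances add, so the spread grows with sqrt(rounds).
std_cumulative = std_per_round * rounds ** 0.5

print(f"expected value per round: ${expected_per_round:,.0f} (manual: ${manual_value:,.0f})")
print(f"std dev per round:        ${std_per_round:,.0f} (manual: $0)")
print(f"after {rounds} rounds: ${expected_per_round * rounds:,.0f} +/- ${std_cumulative:,.0f}")
```

Same mean as the manual developer, with a $300 standard deviation per round against zero. That is the "higher variance, more stress" half of the static comparison.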

But the game is not static. Developer B learns. Every round, success or failure, shifts two parameters:

Probability climbs. Better prompts, better problem decomposition, better judgment about what to delegate. Round one: 10%. Round twenty: maybe 25%. Round fifty: maybe 45%. These numbers come from the conservative model, not from optimism.

Payoff grows. As skill develops, the developer discovers applications they would never have attempted without AI. The scope of what is possible expands. Round one payoff: $1,000. Round fifty: $3,500. Not because the same task got more valuable, but because the developer is now attempting tasks that did not exist in their manual repertoire.

Developer A's round fifty looks exactly like round one. $100. Same ceiling, same floor.
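A minimal sketch of the dynamic game follows. The starting probability, the 60% cap, and the $1,000 starting payoff come from the text. The linear learning rule and the step sizes (`p_step`, `payoff_step`) are assumptions, chosen only so the trajectory lands near the illustrative round-twenty and round-fifty figures above. They are not the published parameters, so the totals will not reproduce the results reported later. Costs are left out.

```python
import random
from statistics import median

def simulate_ai_developer(rng, rounds=100, p_start=0.10, p_cap=0.60,
                          p_step=0.0075, payoff_start=1_000.0, payoff_step=50.0):
    """Return per-round values for one AI-assisted developer.

    p_step and payoff_step are hypothetical learning increments.
    """
    values = []
    for k in range(rounds):
        p = min(p_cap, p_start + p_step * k)      # probability climbs, then caps
        payoff = payoff_start + payoff_step * k   # scope of attempted work grows
        values.append(payoff if rng.random() < p else 0.0)
    return values

rng = random.Random(42)  # fixed seed so the run is repeatable
totals = [sum(simulate_ai_developer(rng)) for _ in range(2_000)]
manual_total = 100.0 * 100  # Developer A: $100 per round, 100 rounds
print(f"median AI total: ${median(totals):,.0f} vs manual ${manual_total:,.0f}")
```

Setting `p_step=0` and `payoff_step=0` recovers the static game, which makes it easy to see how much of the gap comes from learning alone.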

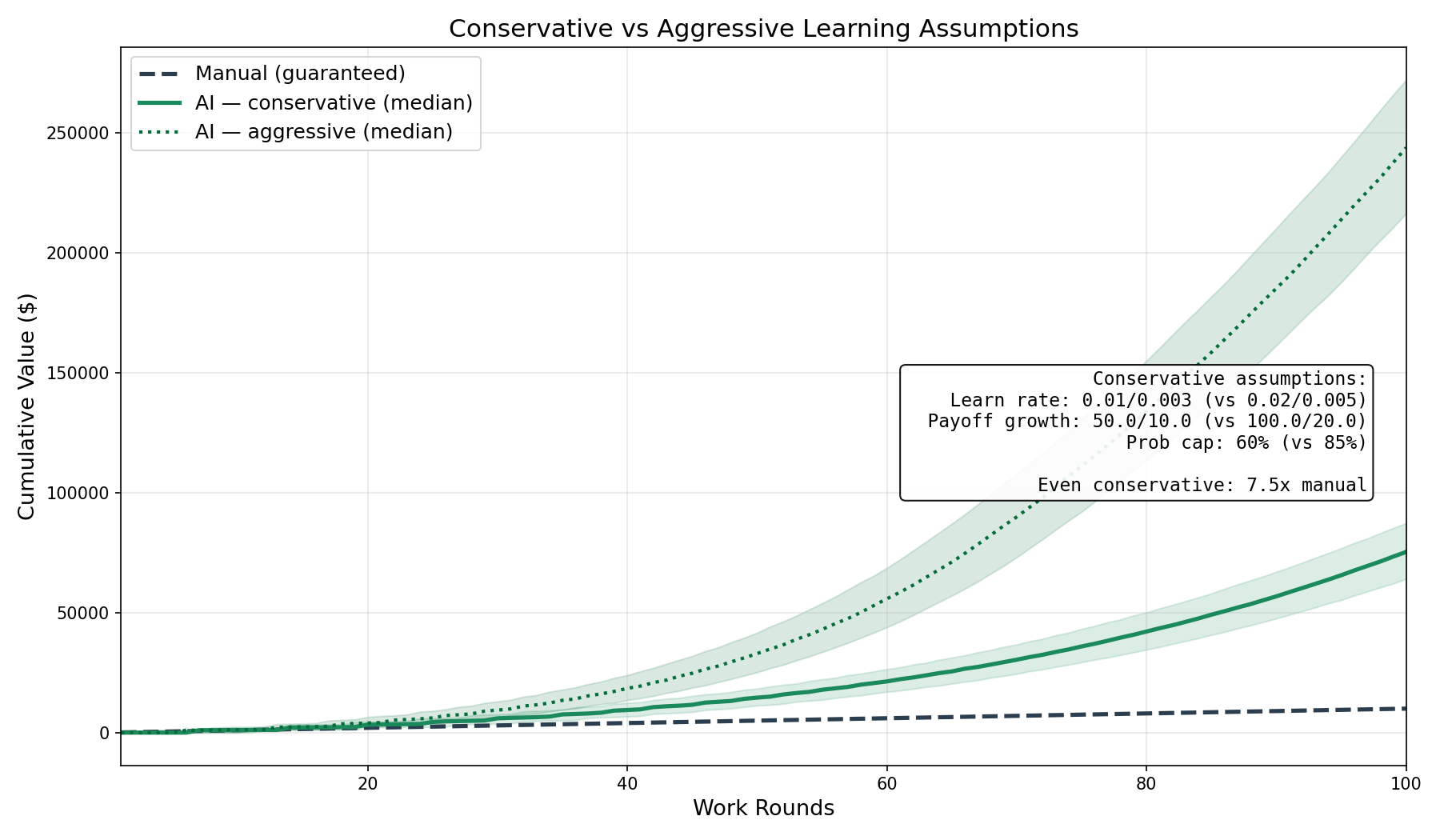

What the Conservative Model Shows

The aggressive version of this model produces dramatic numbers. I will skip it because dramatic numbers invite suspicion. Here is the conservative version: learning rates halved, probability capped at 60% instead of 85%, payoff growth cut in half.

Per-round expected value by round 100: $1,986 for the AI developer. $100 for the manual developer. The AI developer's expected value per round is roughly 20 times the manual baseline by the end, starting from exactly equal.

Cumulative value after 100 rounds: $75,330 for AI. $10,000 for manual. Factor of 7.5.

Crossover point: The round where AI cumulative value first exceeds manual. Median: round 7. Across 10,000 simulations, 100% cross over within 100 rounds. Not 99%. Not 99.9%. Every single simulation.
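The crossover definition above translates directly into code. This is a sketch of one way to compute it for a single simulated run; the function name and the example sequence are hypothetical.

```python
from itertools import accumulate

def crossover_round(ai_values, manual_value=100.0):
    """Return the first 1-based round where cumulative AI value exceeds
    cumulative manual value, or None if it never does."""
    for round_no, ai_total in enumerate(accumulate(ai_values), start=1):
        if ai_total > manual_value * round_no:
            return round_no
    return None

# Hypothetical run: six failed rounds, then one $1,000 success.
example = [0, 0, 0, 0, 0, 0, 1_000, 0, 0, 0]
print(crossover_round(example))  # 7
```

Because one early success is worth ten manual rounds, a first success anywhere in the first nine rounds puts the AI developer ahead immediately.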

Even the unlucky ones win. The 25th percentile AI developer, the one with worse-than-average luck, still finishes above 5x the manual developer.

If the aggressive assumptions are right, the factor is 24x instead of 7.5x. But 7.5x is enough to make the argument. I do not need 24x.

What Does Not Break It

Here is where the argument earns its credibility. I did not just model favorable scenarios. I modeled hostile ones.

Both sides have costs. The manual developer spends $75/round in time, netting $25. The AI developer spends $25 in time plus $10 in API costs. By round 31, those costs are less than 5% of the value the AI developer generates. The cost structure matters early and becomes irrelevant later.
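The 5% figure implies a concrete threshold. The arithmetic below assumes "value" means the AI developer's expected gross value per round, which is a reading of the text rather than something the text states.

```python
time_cost = 25.0   # AI developer's time cost per round (from the article)
api_cost = 10.0    # API cost per round (from the article)
share = 0.05       # "less than 5% of the value"

total_cost = time_cost + api_cost
# Costs are below 5% of value once value exceeds total_cost / 0.05.
break_value = total_cost / share
print(f"costs fall below {share:.0%} once value per round exceeds ${break_value:,.0f}")

manual_net = 100.0 - 75.0  # manual developer's net value per round
print(f"manual net per round: ${manual_net:,.0f}")
```

Under that reading, the claim is that expected value per round passes about $700 by round 31.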

Failures cost real money. What if a failed AI round does not just waste time but creates bugs that cost $75 to fix? Result: 26.7x. What about $150 per failure, a serious production incident? Result: 24.7x. Failure costs alone never break the model at conservative learning rates.

AI models change and your prompts break. What if every 10 rounds, a model update drops your success probability by 20%? Result: 6.5x. Most of what you learn transfers across model changes: how to decompose problems, what good output looks like, when to trust and when to verify. Prompt-level learning is fragile. Judgment-level learning is durable.
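One way to picture the churn scenario is to trace the success probability over time. The sketch below assumes the 20% drop is relative (the probability is multiplied by 0.8) and reuses a hypothetical linear learning step. Neither detail is specified in the text.

```python
def probability_path(rounds=100, p_start=0.10, p_cap=0.60, p_step=0.0075,
                     churn_every=10, churn_drop=0.20):
    """Success probability per round, with a relative drop at each model update.

    p_step is a hypothetical learning increment; churn_every=0 disables churn.
    """
    path = []
    p = p_start
    for round_no in range(1, rounds + 1):
        if churn_every and round_no > 1 and (round_no - 1) % churn_every == 0:
            p *= 1.0 - churn_drop   # model update: lose a fraction of current probability
        path.append(p)
        p = min(p_cap, p + p_step)  # learning resumes after the setback
    return path

with_churn = probability_path()
no_churn = probability_path(churn_every=0)
print(f"round 100 probability: {with_churn[-1]:.2f} with churn, {no_churn[-1]:.2f} without")
```

In this sketch churn slows the climb and lowers the plateau, but the probability keeps recovering between updates because the learning step still applies every round.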

Only successes teach you anything. What if failure rounds contribute zero learning? Result: 7.4x. Slower progression, same direction.

You are a slow learner. What if your learning rate is one quarter of the already-conservative baseline? Result: 6.3x. At one tenth: 3.7x. The math works across a wide range of learning speeds.

You are an expert manual developer. What if the manual developer produces $200 per round instead of $100? Result: 7.2x. The gap is proportional, not absolute. Expert status does not insulate you from the divergence.

AI is weak in your domain. What if the probability caps at 20%? Result: 12.5x. Even a mediocre AI ceiling produces a large multiple because the ceiling still rises and the payoff still grows.

Learning has diminishing returns. S-curve instead of linear? Result: 11.9x. Early gains do most of the work. By the time diminishing returns kick in, the probability is already high enough to dominate.

Your vendor raises prices fivefold. Result: 95x vs 96x at stable pricing. The vendor captures 2% of the value even at quintuple pricing. Switch to a local model instead? Lower capability, lower probability. Still 57x. The capability you built is yours. The vendor rented you a tool.

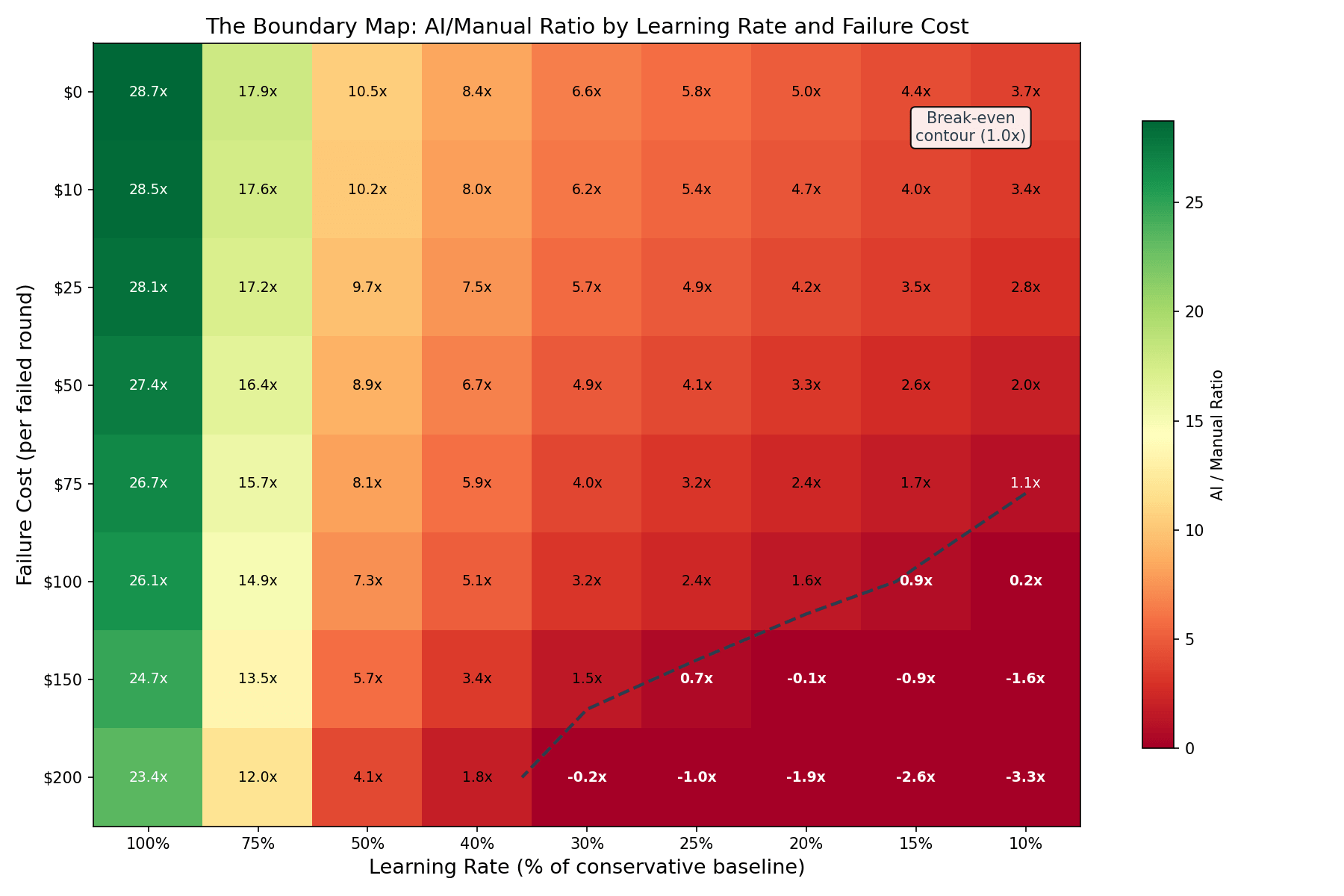

What Breaks It

One scenario out of fifteen.

To break the model, you need all four of these simultaneously: a very slow learner (one quarter of conservative learning rates), failures that cost $75 each, AI model churn every 15 rounds that drops your probability by 15%, and zero learning from failure. Under those combined conditions, the AI developer finishes at 0.12x the manual developer. A clear loss.

That scenario describes a developer who barely learns, whose tools constantly change, who ships bugs to production regularly, and who gains nothing from the experience of shipping bugs. If all four are true, AI adoption is the wrong call. It is also the least of their problems.

For everyone else: the boundary is a diagonal line across a grid of failure cost versus learning rate. At normal learning rates, even $200 per failure does not break the model. At very slow learning rates, failure costs above $100 start to matter. The red zone is narrow and describes a specific kind of work: safety-critical systems, regulated environments, domains where a shipped error costs orders of magnitude more than the manual alternative.
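A grid sweep like the one described can be sketched with expected values instead of random draws. The axis values, the linear learning rule, and the step sizes below are all hypothetical. The sketch covers only failure cost and learning speed, leaving out churn and learning from failure, so it illustrates the shape of the boundary rather than the published grid.

```python
from itertools import product

def expected_ratio(learn_scale, failure_cost, rounds=100, manual_value=100.0,
                   p_start=0.10, p_cap=0.60, p_step=0.0075,
                   payoff_start=1_000.0, payoff_step=50.0):
    """Expected cumulative AI value divided by cumulative manual value.

    learn_scale multiplies the (hypothetical) learning increments.
    """
    total = 0.0
    for k in range(rounds):
        p = min(p_cap, p_start + learn_scale * p_step * k)
        payoff = payoff_start + learn_scale * payoff_step * k
        total += p * payoff - (1.0 - p) * failure_cost  # failures subtract value
    return total / (manual_value * rounds)

learn_scales = [1.0, 0.5, 0.25, 0.1, 0.0]   # hypothetical grid axis
failure_costs = [0, 50, 100, 150, 200]      # hypothetical grid axis, dollars
for scale, cost in product(learn_scales, failure_costs):
    ratio = expected_ratio(scale, cost)
    flag = "AI loses" if ratio < 1.0 else ""
    print(f"learn x{scale:<4} failure ${cost:<3} -> {ratio:5.2f}x {flag}")
```

With no learning at all, any failure cost pushes the ratio below 1. As the learning scale rises, the failure cost needed to reach that line rises with it, which is the diagonal boundary in miniature.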

Most software development does not live in the red zone. Most software development lives in the green zone where the argument holds under every realistic adversarial condition I could construct.

What the Model Assumes

Honesty about assumptions matters more than the results.

The model assumes manual output is flat. In reality, manual developers improve with experience, probably around 0.5-1% per round. At 1% manual growth, the 7.5x advantage shrinks to roughly 5-6x. Meaningful reduction. Still a large multiple.
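The size of that reduction depends on how manual growth is modeled. The sketch below holds the AI total at the reported $75,330 and compares two readings of "1% per round". Both readings are assumptions, since the text does not say which one applies.

```python
ai_total = 75_330.0   # cumulative AI value after 100 rounds (from the article)
rounds = 100
growth = 0.01         # 1% manual improvement per round

# Assumption 1: linear growth, each round adds 1% of the round-one value.
linear_total = sum(100.0 * (1 + growth * k) for k in range(rounds))
# Assumption 2: compounding growth, each round is 1% above the previous one.
compound_total = sum(100.0 * (1 + growth) ** k for k in range(rounds))

print(f"linear:   manual ${linear_total:,.0f}, ratio {ai_total / linear_total:.1f}x")
print(f"compound: manual ${compound_total:,.0f}, ratio {ai_total / compound_total:.1f}x")
```

Linear growth gives a ratio of about 5.0x and compounding growth about 4.4x. Either way the multiple stays well above 1.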

The model assumes learning happens. Not automatically, but as a consequence of paying attention. A developer who copies AI output without reading it is playing the static game: $10,000 cumulative in expectation, identical to manual. The learning curve in this model is earned through deliberate practice, not granted by tool access.

The model assumes binary outcomes: full success or full failure. Real work has partial successes. AI gets you 60% there and you finish the rest. This simplification actually makes the model harder on the AI case, not easier. Under continuous partial success, the variance drops and the consistent-value argument strengthens. Binary is the tougher test.
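The variance point can be illustrated by comparing two payoff rules with the same average. The partial-success rule here (a uniformly distributed completion fraction) is a hypothetical stand-in, not something the model defines.

```python
import random
from statistics import mean, pstdev

rng = random.Random(7)  # fixed seed so the run is repeatable
payoff, n = 1_000.0, 20_000

# Binary: 60% chance of the full payoff, otherwise nothing.
binary = [payoff if rng.random() < 0.60 else 0.0 for _ in range(n)]
# Partial (hypothetical): every round completes 20%-100% of the task, 60% on average.
partial = [payoff * rng.uniform(0.20, 1.00) for _ in range(n)]

print(f"binary:  mean ${mean(binary):,.0f}, std dev ${pstdev(binary):,.0f}")
print(f"partial: mean ${mean(partial):,.0f}, std dev ${pstdev(partial):,.0f}")
```

Both rules average about $600 per round, but the partial-success spread is roughly half the binary one, which is why binary outcomes are the harsher test for anyone who cares about consistency.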

The model code is reproducible and the parameters are published alongside this article.

One More Dimension

The model captures probability and payoff. There is a third factor it does not fully model but that matters in practice: where the developer's thinking goes.

A manual developer spends most of their cognitive cycles on implementation. Typing, debugging, testing. A smaller fraction goes to strategic thinking: architecture, feature prioritization, understanding what the customer actually needs. A meaningful chunk burns on context switching between those two modes.

An AI-assisted developer shifts the ratio. Less time on implementation, more on specification and review, substantially more on strategic thinking. I have only rough estimates of the split, not measurements. But the direction is consistent across every practitioner account I have seen: AI frees cognitive capacity from implementation and makes it available for higher-order work.

The caveat: freed capacity does not automatically flow to strategy. It can flow to meetings, Slack, or more AI prompting. The reallocation is an organizational design choice, not an automatic consequence.

The Dynamic

The manual developer has a fixed ceiling built from real constraints: hours in the day, keystrokes per minute, attention span. The AI developer has a ceiling that climbs with each round because both probability and payoff shift upward. It does not climb automatically. It climbs because the developer is learning.

By the time the gap between these two ceilings is visible, it is already large. By the time it is large, the manual developer cannot close it by switching, because the AI developer has dozens of rounds of accumulated learning that would need to be replicated from scratch.

That is the dynamic game. It compounds in both directions. And it penalizes waiting.
