Why AI Adoption Feels Like Losing Even When You Are Winning

A developer tries AI for a month. Here is what their internal log looks like.

Week one: spent three hours with an AI coding assistant on a feature. Got nothing usable. Went back and wrote it by hand in two hours. Net loss: three hours.

Week two: tried again on a different feature. The AI produced something structurally close but hallucinated the database schema. Spent an hour fixing it. Probably broke even compared to writing it from scratch, maybe lost 20 minutes.

Week three: AI nailed a utility function on the first try. Felt good. Then it generated a test suite that tested the wrong behavior. Scrapped the tests, wrote them manually.

Week four: solid output on a straightforward CRUD endpoint. The one genuinely good round in four weeks.

Four weeks. One clear win. One break-even. Two losses. The developer's honest assessment: "I gave it a fair shot. It is not for me."

That assessment is wrong. Not because the developer is lazy or closed-minded, but because human perception systematically distorts exactly this kind of experience. The developer's month was roughly even and trending upward, and it felt like a clear loss. The distortion is well documented. Daniel Kahneman won a Nobel Prize for mapping it. And it is operating on every developer who has tried AI and walked away.

The Hundred Dollar Question

Would you take $100 right now, or a 10% chance at $1,000?

Both options have the same expected value: $100, since 10% of $1,000 is $100. On paper, they are interchangeable. But most people take the guaranteed money. Daniel Kahneman and Amos Tversky spent decades studying why, and what they found earned Kahneman a Nobel Prize in 2002.

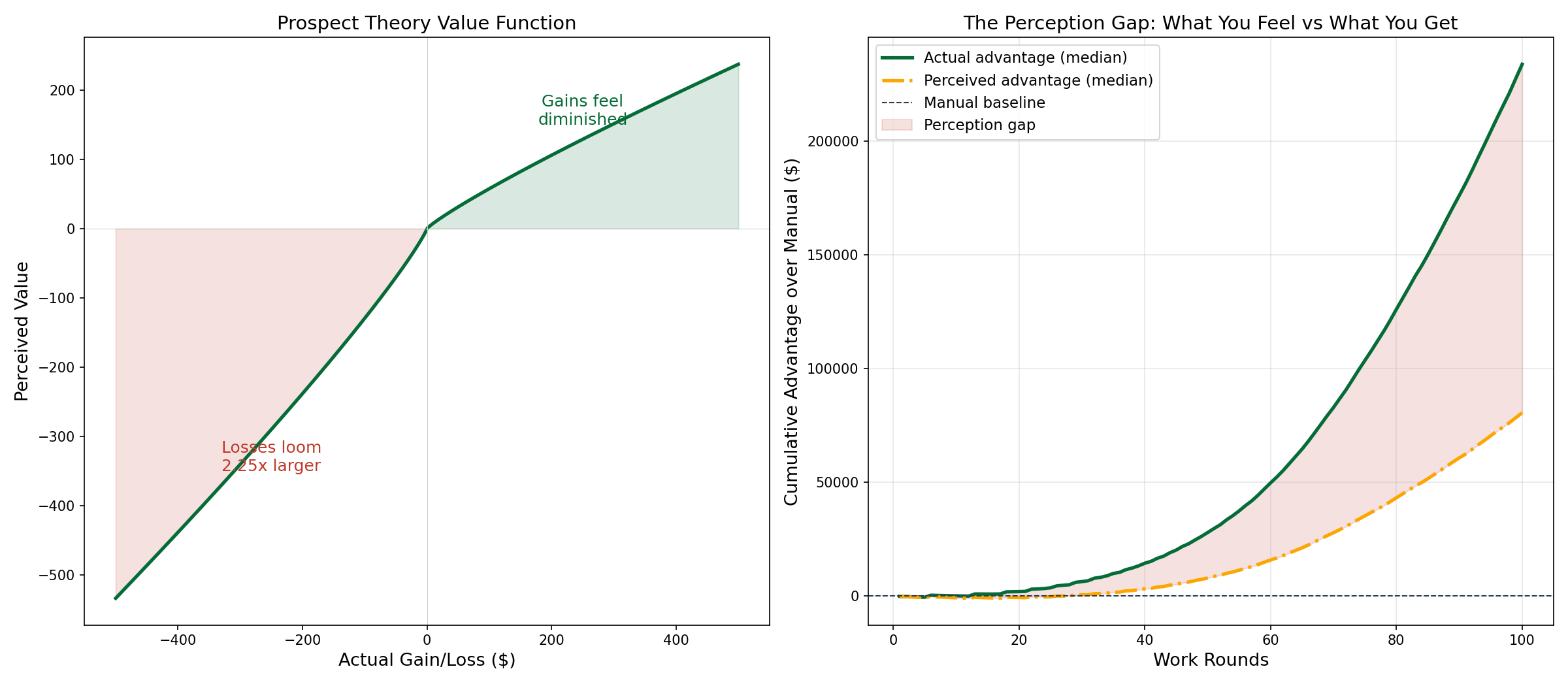

One of their central findings: humans do not experience gains and losses symmetrically. A $100 loss feels roughly 2.25 times as painful as a $100 gain feels good. Losing $100 is not the opposite of gaining $100. It is more than twice as bad.
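To make the 2.25 figure concrete, here is a minimal sketch of the prospect-theory value function. The loss-aversion coefficient of 2.25 is the one quoted above. The curvature exponent of 0.88 comes from the same Tversky and Kahneman (1992) estimates but is an addition here, not something this article relies on. Because a gain and a loss of the same size share the exponent, the curvature cancels and the loss-to-gain ratio comes out as exactly the coefficient.

```python
LOSS_AVERSION = 2.25  # lambda: Tversky & Kahneman's (1992) median estimate
CURVATURE = 0.88      # alpha = beta: same study; an added assumption for this sketch

def prospect_value(x: float) -> float:
    """Subjective value of a gain (x > 0) or loss (x < 0) relative to the reference point."""
    if x >= 0:
        return x ** CURVATURE                    # gains: concave, diminishing sensitivity
    return -LOSS_AVERSION * (-x) ** CURVATURE    # losses: same shape, scaled up by lambda

gain = prospect_value(100)
loss = prospect_value(-100)
print(f"gain {gain:.1f}, loss {loss:.1f}, ratio {loss / gain:.2f}")
```

The printed ratio is -2.25: the same $100, felt more than twice as strongly when it is a loss.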

Loss aversion is not a flaw in human cognition. It is a feature that worked well for a very long time. In environments where a single bad outcome could kill you, overweighting losses keeps you alive. The ancestor who shrugged off a loss did not become an ancestor.

The problem is that this same wiring now processes a failed AI coding session. A wasted three-hour afternoon gets run through the same loss-amplification that evolved to protect you from losing food or shelter. Not at the same intensity, but through the same machinery. You feel the loss 2.25 times as hard as you feel an equivalent win.

Go back to the developer's month. One good week, one neutral week, two bad weeks. The rational ledger is roughly break-even, maybe slightly negative. The perceptual ledger is brutally negative, because the two bad weeks each landed with more than double the force of the one good week.
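The gap between the two ledgers is easy to reproduce. The sketch below assigns hypothetical net hours to the four weeks: only week one's three-hour loss comes from the log, and the other three values are made-up numbers chosen to match the narrative (a wash, a loss, a win). It applies the 2.25 coefficient linearly to losses and ignores curvature, which is a simplification.

```python
LOSS_AVERSION = 2.25

# Hypothetical net hours saved (+) or lost (-). Only week 1's -3.0 comes from the log.
weekly_net_hours = {"week 1": -3.0, "week 2": 0.0, "week 3": -1.0, "week 4": 3.5}

def felt(hours: float, loss_aversion: float = LOSS_AVERSION) -> float:
    """Losses are weighted by the loss-aversion coefficient; gains count at face value."""
    return hours if hours >= 0 else loss_aversion * hours

rational = sum(weekly_net_hours.values())
perceived = sum(felt(h) for h in weekly_net_hours.values())
print(f"rational ledger: {rational:+.2f} h, perceived ledger: {perceived:+.2f} h")
```

With these placeholder numbers the rational ledger is -0.50 hours, close to break-even, while the perceived ledger is -5.50 hours.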

The developer did not evaluate AI and find it lacking. Their perception evaluated AI and found it threatening. Those are not the same thing.

Two Thirds of the Time It Feels Like Failure

This gets worse at scale.

I ran a Monte Carlo simulation: 10,000 developers, each working 100 rounds. A round is one meaningful work unit: a feature, a bug fix, a module. Value is measured as output produced relative to a manual baseline of $100 per round. Conservative assumptions throughout: learning rates set at half of an optimistic estimate, AI reliability capped at 60%, and costs deducted every round whether you succeed or not.

Under those conservative conditions, the AI-adopting developer produces 7.5 times the cumulative value of the manual developer by round 100. Not marginally better. Seven and a half times better.

But here is the number that explains every developer who tried AI and quit: 67% of individual rounds produce zero visible output. Two thirds of the time, the developer has nothing to show for the round. They spent the time, paid the cost, got nothing. The other third, they got something big enough to more than compensate. But human perception does not work on cumulative value. It works on the last thing that happened.
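The simulation code and its full parameter set are not published here, so what follows is only a sketch of how a Monte Carlo of this shape could be set up, not a reproduction of the 7.5x and 67% figures. It keeps the structure described above: 10,000 developers, 100 rounds, a $100-per-round manual baseline, success probability capped at 60%, and a cost charged every round. The 15% starting probability and one-point-per-round learning step echo the 15%-to-16% example used later in the article; the payoff, payoff growth, and per-round cost are hypothetical placeholders.

```python
import random
import statistics

MANUAL_VALUE_PER_ROUND = 100.0  # manual baseline: $100 of output per round

def simulate_developer(rng: random.Random, rounds: int = 100, p0: float = 0.15,
                       learn: float = 0.01, p_cap: float = 0.60, payoff0: float = 150.0,
                       payoff_growth: float = 0.02, cost: float = 20.0) -> tuple[float, int]:
    """One AI-adopting developer. Returns (cumulative value, number of zero-output rounds).

    Every default except the 60% cap is a hypothetical placeholder.
    """
    p, payoff = p0, payoff0
    total, zero_rounds = 0.0, 0
    for _ in range(rounds):
        total -= cost                  # the cost is deducted every round, win or lose
        if rng.random() < p:
            total += payoff            # a successful round pays the current payoff
        else:
            zero_rounds += 1           # nothing visible to show for this round
        p = min(p_cap, p + learn)      # skill improves whether or not the round succeeded
        payoff *= 1 + payoff_growth    # the scope of what can be attempted keeps growing
    return total, zero_rounds

def run_population(n_devs: int = 10_000, rounds: int = 100, seed: int = 0, **params):
    """Returns (mean AI value / manual value, share of all rounds with zero output)."""
    rng = random.Random(seed)          # fixed seed so runs are reproducible
    results = [simulate_developer(rng, rounds=rounds, **params) for _ in range(n_devs)]
    mean_value = statistics.mean(total for total, _ in results)
    zero_share = sum(z for _, z in results) / (n_devs * rounds)
    return mean_value / (MANUAL_VALUE_PER_ROUND * rounds), zero_share

if __name__ == "__main__":
    ratio, zero_share = run_population()
    print(f"AI / manual cumulative value: {ratio:.2f}x")
    print(f"Share of zero-output rounds: {zero_share:.0%}")
```

Both printed numbers depend heavily on the placeholder parameters, so this is a tool for exploring the shape of the model rather than evidence for any particular multiplier.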

Tversky and Kahneman's estimate of the loss aversion coefficient is 2.25. Apply it: each of those zero-output rounds hits 2.25 times harder than an equivalent productive round. Run the math and the developer's perceived experience stays near zero or negative for the first 40 to 50 rounds, even while their actual cumulative value is climbing steeply.
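One way to model "perception works on the last thing that happened" is an exponentially weighted moving average of each round's felt outcome, with losses scaled by 2.25. The moving average and its recency weight are assumptions for illustration; the article does not say how perceived experience was computed. The usage line feeds it a short run that ends in profit but closes on two misses.

```python
LOSS_AVERSION = 2.25

def perceived_vs_actual(net_outcomes, loss_aversion=LOSS_AVERSION, recency=0.2):
    """Track cumulative actual value alongside a recency-weighted, loss-averse 'mood'.

    recency is a hypothetical weight: 1.0 means only the latest round matters.
    """
    actual, perceived = [], []
    cumulative, mood = 0.0, 0.0
    for x in net_outcomes:
        cumulative += x                                  # what the ledger really says
        felt = x if x >= 0 else loss_aversion * x        # losses land 2.25x harder
        mood = (1 - recency) * mood + recency * felt     # recent rounds dominate
        actual.append(cumulative)
        perceived.append(mood)
    return actual, perceived

actual, perceived = perceived_vs_actual([-20.0, -20.0, 100.0, -20.0, -20.0], recency=0.5)
print(f"actual: {actual[-1]:+.2f}, perceived: {perceived[-1]:+.2f}")
```

Here the actual ledger finishes at +20 while the perceived score finishes at about -25.5: ahead on paper, behind in the gut.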

You are winning and it feels like losing. Not occasionally. For the majority of the experience.

The developer who quit at week four was on schedule. Their trajectory was pointed upward. Their perception said otherwise, and perception won.

The Game Is Not What You Think It Is

The standard objection: "Fine, but those are still bad odds. I would rather have $100 guaranteed than flip a coin that comes up empty 67% of the time."

Fair enough, if the coin stays the same. It does not.

This is where most thinking about AI adoption goes wrong. People evaluate it as a single decision: adopt or do not adopt. Calculate expected value, compare to manual, choose. Static analysis of a dynamic system.

The game changes as you play it. Two parameters shift with every round.

The first is probability. You get better at working with AI. Better prompts, better problem decomposition, better judgment about what to delegate and what to keep. Each round teaches you something about the tool, whether the round succeeded or not. A failed round where you learned why it failed is not a wasted round. It is a round that moved your success probability from, say, 15% to 16%.

The second is payoff. As your skill grows, you start seeing applications you could not have imagined at the beginning. The developer who starts with "can AI write this function?" eventually gets to "can AI generate the test harness and the deployment configuration and the monitoring setup?" The scope of what you can attempt grows. The payoff when you succeed grows with it.

Manual work does not have this property. The developer writing code by hand in month 12 is doing roughly the same work at roughly the same speed as in month one. They might be slightly more experienced, but the ceiling is the ceiling. There are only so many hours and so many keystrokes.
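The difference between a static and a dynamic comparison can be sketched as a per-round expected value. Manual work stays flat at the $100 baseline. The AI path's expected value is success probability times payoff minus cost, and both probability and payoff climb each round. All parameter values are hypothetical: a 15% start rising one point per round (echoing the 15%-to-16% example above) and capped at 60%, a $150 payoff growing 2% per round, and a $20 cost per round.

```python
MANUAL_VALUE_PER_ROUND = 100.0  # flat manual baseline

def ai_expected_values(rounds: int, p0: float = 0.15, learn: float = 0.01,
                       p_cap: float = 0.60, payoff0: float = 150.0,
                       payoff_growth: float = 0.02, cost: float = 20.0) -> list[float]:
    """Expected value of each AI-assisted round; every default is a hypothetical placeholder."""
    p, payoff = p0, payoff0
    values = []
    for _ in range(rounds):
        values.append(p * payoff - cost)  # EV of this round given current skill and scope
        p = min(p_cap, p + learn)         # the odds improve...
        payoff *= 1 + payoff_growth       # ...and so does the prize
    return values

def first_round_beating_manual(values, manual=MANUAL_VALUE_PER_ROUND):
    """1-based index of the first round whose EV exceeds the manual baseline, or None."""
    for round_no, value in enumerate(values, start=1):
        if value > manual:
            return round_no
    return None

dynamic = ai_expected_values(100)
static = ai_expected_values(100, learn=0.0, payoff_growth=0.0)  # the coin never changes
print("dynamic game beats manual from round", first_round_beating_manual(dynamic))
print("static game beats manual from round", first_round_beating_manual(static))
```

With these made-up numbers the dynamic version overtakes manual work at round 31, while the static version never does. The exact round is an artifact of the placeholders; the structural point is that a game which starts below the baseline and never changes can never cross it.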

The AI developer in month 12 is playing a fundamentally different game than the one they played in month one. Higher probability of success. Larger payoff when they succeed. The developer who quit at week four was comparing the wrong version of the game to the wrong baseline. They evaluated version 1.0 of themselves against the fully mature version of manual work and concluded AI loses. They never saw version 3.0 of themselves.

The Faster Horse

There is a subtler version of this mismatch. The developer who uses AI to write functions faster has made a faster horse. Same job, same output, same measurement. The speedup is real but bounded.

The developer who uses AI to rethink what they build, how they specify it, what is possible given tools they did not have before, has done something different. They are not doing the same job faster. They are doing a different job. The payoff growth in the model is not "write code 10x faster." It is "attempt projects that were previously outside your capability range." That category of value does not exist in the manual baseline. You cannot reach it by typing faster.

The developer who quit at week four was measuring AI against the old job. The question is whether it performs the new one.

The Diary They Never Wrote

Go back to the developer's log. Weeks one through four are real data. Here is what the model suggests weeks five through twelve might have looked like, based on 10,000 simulated trajectories at the same learning rate.

Week five: still mostly not working. But the complete failures are getting rarer. One task produces a usable draft after editing. Hard to tell if that is progress or luck.

Week seven: the developer notices they are framing problems differently before they start. Tighter specifications. Smaller scopes. Not sure when that changed.

Week nine: a routine task finishes in two hours instead of a day. The developer is not sure the AI deserved the credit, but they would not have structured the work that way without it.

Week twelve: still failing on complex tasks. But the failures produce rough drafts instead of garbage. The floor moved. Quietly.

None of that happened. The developer was not there to see it. They had four weeks of data, their perception scored it as failure, and they walked away from a trajectory that was already bending upward.

AI adoption does not fail because it is too hard. It fails because it looks too hard during the exact period when it is working. Weeks one through four are genuinely difficult. They are also not representative of weeks five through fifty. The perceptual system scoring the experience is optimized for detecting threats, not for tracking cumulative value. In a survival context that is exactly what you want. In a learning context it will talk you out of the best investment you could make.

The developer who stays in the game long enough for the math to take over does not need to believe AI is great. They need to distrust their own scoreboard for about eight weeks. That is a smaller ask than it sounds. And a harder one than it looks.

Navigating AI Adoption in Your Organization?

The math is clear. The people and culture side is harder. If your team is stuck between AI resistance and mandate fatigue, a conversation might help.
