Why Your Brain Won’t Let You Evaluate AI

If you have already made up your mind about AI, the interesting question is not what you decided. It is when you decided. And whether you decided at all, or whether something faster than conscious thought got there first.

The Evaluation That Never Happened

Picture a team meeting. Someone demos an AI-assisted coding workflow. A senior developer watches. Within seconds, their chest tightens. A thought arrives fully formed: "That will never work for real code." They spend the rest of the demo scanning for the flaw. They find one. They feel right.

The meeting ends. The senior developer has "evaluated" AI and found it wanting. Except they did not evaluate anything. The conclusion arrived before the evidence. The rest was confirmation.

This is not a character flaw. It is a specific, identifiable cognitive event. And intelligence does not protect you from it. If anything, intelligence makes it worse: the better your analytical skills, the more efficiently you can build a case for a conclusion your body already reached.

Some AI skepticism is well-reasoned. A developer who has tested AI tools on real workloads, measured the output quality, tracked the time investment and concluded the return is not there yet has done the work. Slow, deliberate, evidence-based. This piece is not about that person.

This piece is about the developer whose conclusion arrived in the first 30 seconds and who spent the rest of the meeting building a case around it. That is a different cognitive event entirely. And it is far more common than the first one.

Five Signals You Are Not in Evaluation Mode

The tricky part is that a threat response feels identical to a rational conclusion from the inside. Both produce certainty. Both feel earned. The difference is in what produced them.

Here are five patterns. If you recognize yourself in any of them, that is useful information, not a judgment.

You are defending identity, not evaluating capability. "I am a craftsman, not a prompt engineer." The objection is not about what AI does. It is about what using AI would make you. The evaluation is running against your self-concept, not against the tool's output. The tell: you are measuring AI against who you are, not what it does.

You are protecting a sunk cost. "I spent 15 years mastering this." The argument centers on your investment, not the outcome. Fifteen years of expertise is genuinely valuable. But whether AI reduces the return on that expertise is a question about the market, not about your history. The tell: the argument is about what you put in, not what you would get out.

You are worried about hierarchy, not quality. "Juniors with AI will outperform me." This is a status threat, not a quality concern. If a junior engineer produces better output with AI assistance, the output is still better. The question it raises is about your relative position, not about whether the work improved. The tell: resentment toward people who adopted early.

Your objections match your team's objections exactly. If your skepticism mirrors your peer group's skepticism word for word, you may be processing social pressure rather than evidence. Belonging is a powerful driver. Disagreeing with your team about something this charged carries real social cost. The tell: you have never seriously considered AI when alone, only confirmed your position when surrounded by agreement.

Every conversation ends at job security. "AI will replace me." The big one. Existential anxiety compressed into tactical objections about hallucinations and code quality. The underlying fear is survival. Everything else is a proxy. The tell: no amount of evidence about AI's current limitations makes the unease go away, because the objection was never about current limitations.

None of these signals mean your conclusion is wrong. AI might genuinely be the wrong call for your situation. But if the conclusion was produced by threat rather than evaluation, you do not actually know that yet.

Why This Happens and Why It Is Not Weakness

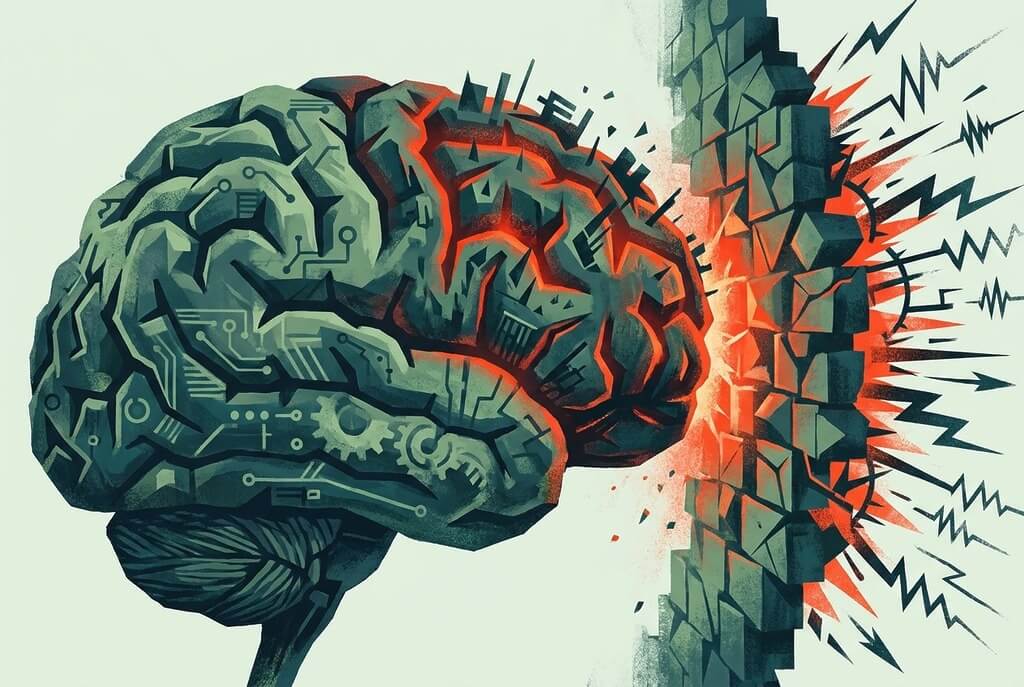

The brain has multiple processing modes. Two matter here.

Threat mode is fast, binary and protective. It classifies inputs as safe or dangerous and acts before conscious thought catches up. It exists because ancestors who paused to carefully evaluate whether that rustling in the grass was a predator did not become ancestors. Speed beats accuracy when the cost of a false negative is death.

Deliberate mode is slow, analytical and evidence-based. It weighs options, considers tradeoffs, updates beliefs on new information. It produces better decisions. It also takes time that the threat system does not grant when it believes survival is at stake.

The critical point: threat mode does not just bias your evaluation. It prevents evaluation from starting. The deliberate system does not get a turn. The feeling of certainty arrives pre-packaged. The developer in the meeting did not evaluate and reject AI. They never evaluated at all.

"Survival" in a professional context is not physical death. It is identity death, competence death, status death. The brain does not distinguish well between physical threats and professional ones. A threat to your professional identity activates the same protective machinery as a threat to your physical safety. The stakes are obviously different. The speed of the response is not.

The Pattern History Already Showed Us

The Luddites were skilled textile workers. Their livelihoods were genuinely threatened by mechanized looms. History calls them wrong, and over a 50-year timeline, they were. But their threat response was correct. The threat was real. Their jobs did disappear.

What they missed: new work appeared alongside the destruction. Not immediately. Not evenly. But the workers who started learning the new machines instead of breaking them found themselves ahead of the workers who did not. The differentiator was not fearlessness. Most of them were afraid too. The differentiator was whether the fear stayed in charge long enough to prevent them from examining what was actually in front of them.

The parallel cuts both ways, and anyone who uses the Luddite story as a simple morality tale is misreading it. The Luddites were right that the transition would be painful. Telling a textile worker in 1812 to "just retrain" was useless advice. The retraining infrastructure did not exist yet. The social safety net did not exist yet. The new jobs had not all been created yet.

AI skeptics in 2026 are in a similar position. The threat to certain kinds of coding work is real. The new work that replaces it is still being defined. The transition will be painful for some people. All of that is true.

What is also true: the difference between the Luddites and developers in 2026 is not the size of the threat. It is that we now understand the mechanism. We know why the threat response fires. We know what it does to evaluation. We can see it while it is happening. That does not make it easy to override. But it makes it possible to notice.

Moving from Threat to Evaluation

Name the response. "I notice I have a strong position that arrived before I looked at evidence." The response exists regardless of whether you name it. Naming it gives you something to work with instead of something working you.

Separate signal from noise. Some objections are about AI's actual limitations. Hallucinations are real. Quality inconsistency is real. Those are legitimate engineering concerns. Other objections are about what AI means for your identity, your status, your security. Those are legitimate human concerns. They are not engineering concerns. Mixing them guarantees a bad engineering decision aimed at solving a human problem.

Run a bounded experiment with zero stakes. Not "adopt AI." Not "bet your next sprint on it." Pick a side project that does not matter. A tool nobody is waiting for. A prototype that will never ship. Give yourself five hours with no consequence attached. The point is not to prove AI works. The point is to find out whether you can evaluate it when the threat response is not running the show.

Track the process, not the outcome. After the experiment, do not ask "did AI produce good code?" Ask "did I actually evaluate it, or did I find the first flaw and stop?" The outcome of a five-hour experiment tells you almost nothing about AI's long-term value. The process tells you something about your own judgment.

The One Question

This piece does not argue that AI is right for you. That is a question that deserves a real answer from a real evaluation. You will not get to that answer while your brain is treating the question as a survival threat.

So: have you evaluated, or have you reacted?

If you have evaluated and decided against, you have done the work. Your skepticism is earned.

If your position arrived fully formed and has never been seriously tested, you owe yourself five hours and one honest question. Not for AI's sake. For yours.
